Why AI Risk Management Should Be Your Next Strategic Priority

How leading organizations are turning AI governance into a competitive advantage—while protecting their people and their reputation

The AI revolution isn’t coming; it’s already here. And if you’re like most HR leaders, you’re probably fielding questions from your board, your CEO, and your teams about how to harness AI’s potential while keeping your organization safe.

According to Gartner’s 2025 Cybersecurity Innovations Survey, a whopping 81% of organizations are now on their GenAI adoption journey. Yet the same survey revealed rising project failures, compliance issues, and AI misuse incidents stemming from inadequate governance.

The organizations winning with AI aren’t just the ones adopting fastest; they’re the ones managing risk smartest. Let’s explore what AI risk management actually means and why it deserves a prominent spot on your strategic agenda.

What is AI Risk Management?

AI risk management is a systematic process for identifying, assessing, mitigating, and controlling the unique risks of artificial intelligence systems throughout their entire lifecycle. Those risks include algorithmic bias, data privacy concerns, explainability challenges, and security vulnerabilities. The goal is safe, ethical, compliant, and responsible AI use.

Think of it this way: traditional risk management is like having guardrails on a highway. AI risk management is like having guardrails on a highway where the road itself is constantly changing shape, new lanes appear overnight, and sometimes the cars make their own decisions about where to go. The fundamentals are the same—identify risks, assess impact, implement controls—but the dynamic, autonomous nature of AI systems requires a fresh approach.

According to McKinsey’s State of AI 2025 report, organizations are now working to mitigate an average of four AI-related risks, up from just two in 2022. The risks have multiplied because AI touches everything: your hiring processes, your employee development programs, your workforce planning, and increasingly, the daily decisions your people make.

Why AI Risk Management Matters for Your Organization

Protecting Your Workforce and Reputation

Your people are watching how you implement AI. A Deloitte survey found that regulatory uncertainty and risk management have risen to become top barriers for organizations deploying GenAI. When AI systems make biased recommendations about who gets promoted or which candidates get interviewed, the damage extends far beyond legal liability—it erodes the trust you’ve spent years building with your workforce.

The reputational stakes are real. One high-profile AI failure can undo years of employer branding work. Conversely, organizations that demonstrate thoughtful, responsible AI adoption often find it becomes a competitive advantage in talent acquisition.

Ensuring Regulatory Compliance

The regulatory landscape is shifting rapidly. The EU AI Act entered into force in August 2024, with its obligations phasing in over the following years, and PwC’s analysis suggests that federal policies are increasingly shaping corporate norms around AI governance. In Deloitte’s research, worries about complying with regulations jumped 10 percentage points year-over-year to become the top barrier to GenAI deployment.

For CHROs, this isn’t abstract policy talk; it directly affects how you can use AI in recruiting, performance management, and workforce planning. Getting ahead of compliance requirements now means avoiding painful remediation later.

Building Employee and Stakeholder Trust

Trust is the currency of the AI era. PwC’s 2025 Responsible AI Survey found that nearly 60% of executives say responsible AI boosts ROI and efficiency, while 55% report improvements in customer experience and innovation. The organizations seeing these benefits aren’t treating governance as a checkbox; they’re making it a competitive differentiator.

When employees understand that AI systems affecting their work lives are governed thoughtfully and transparently, engagement improves. When boards see robust risk management frameworks, they’re more willing to approve transformative AI investments.

Enabling Safe Innovation and Competitive Advantage

Good AI governance doesn’t slow innovation; it accelerates it. McKinsey’s research suggests that agentic AI systems could help unlock $2.6 trillion to $4.4 trillion annually in value. But capturing that value requires embedding security and governance from the start, not bolting them on afterward.

Organizations with clear AI guardrails can move faster because they don’t have to pause for ad-hoc risk assessments on every project. They can experiment more boldly because they know their governance framework will catch potential issues before they become crises.

Key AI Risks Business Leaders Must Address

Security Risks in AI Systems

AI systems introduce attack vectors that traditional cybersecurity wasn’t designed to handle. Gartner reports that AI-enhanced malicious attacks have been the top emerging risk for enterprises for multiple consecutive quarters. From prompt injection attacks to model manipulation, the threat landscape is evolving faster than many security teams can adapt.

For HR systems specifically, this means protecting sensitive employee data, ensuring AI-powered recruiting tools can’t be gamed by bad actors, and maintaining the integrity of decisions that affect people’s livelihoods.

Ethical and Legal Risks

Algorithmic bias isn’t a theoretical concern; it’s a litigation risk. AI systems trained on historical data can perpetuate or amplify existing biases in hiring, promotion, and performance evaluation. Deloitte’s AI governance research identifies unethical use and bias among the key risks that can pose reputational consequences and erode stakeholder trust.
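One way to make a bias check concrete is to compare selection rates across groups. The sketch below is a minimal illustration, not a method drawn from the research cited above: it computes each group's selection rate and divides it by the highest group's rate, then flags any group below 0.8, a threshold borrowed from the "four-fifths" rule of thumb used in U.S. employment-selection guidance. The group labels, the outcome counts, and the function names are all hypothetical, and a flag is a prompt for human review rather than a legal finding.

```python
from collections import Counter


def selection_rates(records):
    """Return {group: selected / total} for (group, was_selected) pairs."""
    totals = Counter()
    selected = Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def adverse_impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody was selected in any group, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / top for group, rate in rates.items()}


# Hypothetical screening outcomes: (group label, advanced to interview?)
records = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 25 + [("B", False)] * 75
)

rates = selection_rates(records)
ratios = adverse_impact_ratios(rates)

# Assumed review threshold of 0.8 (the four-fifths rule of thumb).
flagged = sorted(group for group, ratio in ratios.items() if ratio < 0.8)
print(rates, ratios, flagged)
```

In this made-up data, group B advances at a lower rate than group A and falls under the assumed threshold, so it would be routed to a person for a closer look at the tool and the underlying data.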

Operational and Decision-Making Risks

When AI systems hallucinate or produce inaccurate outputs, business decisions suffer. According to McKinsey, nearly one-third of all respondents reported consequences stemming from AI inaccuracy. In HR contexts, this could mean flawed workforce planning projections, incorrect skills assessments, or misleading analytics that drive poor strategic decisions.

Compliance Risks

The regulatory environment is fragmenting rapidly. Different jurisdictions have different requirements for AI transparency, fairness testing, and human oversight. Deloitte’s State of Generative AI in the Enterprise survey found that difficulty managing risks increased 6 percentage points year-over-year, reflecting the growing complexity of the compliance landscape.

Reputational and Trust Risks

Trust is fragile. A single AI-related incident—a biased hiring algorithm exposed in the press, a data breach through an AI system, or an embarrassing chatbot failure—can damage your employer brand and stakeholder relationships. Organizations that proactively communicate their AI governance approach build resilience against these risks.

AI Risk Management Frameworks

You don’t have to build your AI risk management approach from scratch. Several established frameworks provide structured approaches that have been validated across industries.

NIST AI Risk Management Framework (AI RMF)

The NIST AI RMF has emerged as a leading framework for U.S. organizations. It organizes AI risk management into four core functions:

- Govern: Establishing a culture of AI risk management with clear accountability and policies

- Map: Identifying and contextualizing AI risks across your specific use cases and environment

- Measure: Assessing and quantifying AI risks using appropriate metrics and methodologies

- Manage: Prioritizing and mitigating AI risks based on their potential impact
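To show how the Map, Measure, and Manage functions might connect in practice, here is a small sketch of a risk register. The 1-to-5 scales, the likelihood-times-impact score, the field names, and the example entries are assumptions made for illustration; none of them is taken from the framework text, and many organizations use different scoring schemes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskEntry:
    use_case: str    # Map: where the AI system is used
    risk: str        # Map: what could go wrong
    likelihood: int  # Measure: 1 (rare) to 5 (almost certain), assumed scale
    impact: int      # Measure: 1 (minor) to 5 (severe), assumed scale
    owner: str       # Govern: who is accountable

    def __post_init__(self):
        # Reject ratings outside the assumed 1-to-5 scale.
        for name in ("likelihood", "impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def score(self) -> int:
        """Simple likelihood-times-impact score (an illustrative convention)."""
        return self.likelihood * self.impact


def prioritize(register):
    """Manage: order entries from highest to lowest score."""
    return sorted(register, key=lambda entry: entry.score, reverse=True)


# Hypothetical register entries for HR-related AI use cases.
register = [
    RiskEntry("resume screening", "algorithmic bias", 3, 5, "HR"),
    RiskEntry("HR chatbot", "inaccurate answers", 4, 2, "IT"),
    RiskEntry("workforce analytics", "employee data exposure", 2, 5, "Security"),
]

for entry in prioritize(register):
    print(f"{entry.score:>2}  {entry.use_case}: {entry.risk} (owner: {entry.owner})")
```

Even a register this simple forces two useful conversations: who owns each risk, and which risks the organization agrees to tackle first.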

ISO/IEC 23894:2023

This international standard provides guidance for AI risk management across the entire AI lifecycle, from conception through deployment and retirement. It’s particularly valuable for organizations that operate globally and need a framework that transcends regional regulatory differences.

ISO/IEC 42001:2023

The first global AI management system standard, ISO/IEC 42001 helps organizations establish, implement, maintain, and continually improve their AI management systems. Deloitte notes that organizations should retain evidence like AI model design requirements, performance monitoring logs, and data audit trails to demonstrate sustained compliance.

Implementing AI Risk Management in Your Organization

Establishing Governance and Accountability

Clear ownership is essential. PwC’s research shows that 56% of executives say their first-line teams—IT, engineering, data, and AI—now lead responsible AI efforts. But effective governance requires a three-lines-of-defense model: first line builds and operates responsibly, second line reviews and governs, third line assures and audits.

For CHROs, this means ensuring HR has a seat at the AI governance table, not just as a stakeholder affected by AI decisions, but as an active participant in shaping how AI is deployed across the workforce.

Building Cross-Functional Risk Teams

Gartner predicts that by 2028, 25% of large organizations will have dedicated AI governance teams, up from less than 1% in 2023. These teams should bring together diverse perspectives: technical experts who understand model behavior, legal and compliance professionals who navigate regulatory requirements, HR leaders who champion employee interests, and business stakeholders who define acceptable risk tolerances.

Creating AI Use Policies

Policies should be specific enough to guide behavior but flexible enough to accommodate rapid technological change. Deloitte’s research on shadow AI highlights that unsanctioned AI deployments by individual teams create governance blind spots. Clear policies help channel innovation into governed pathways while maintaining the agility employees need.

Training Leaders and Workforce

AI literacy is becoming a core competency. According to McKinsey’s workplace AI report, employees’ top concerns about AI are cybersecurity, privacy, and accuracy. Training programs should address these concerns directly while building the skills people need to work effectively alongside AI systems.

Monitoring and Continuous Improvement

AI risk management isn’t a one-time project; it’s an ongoing discipline. Gartner’s TRiSM framework emphasizes the need for continuous governance, monitoring, validation, testing, and compliance. As AI capabilities evolve and new use cases emerge, your risk management approach must evolve with them.
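As a rough illustration of what continuous monitoring can look like at its simplest, the sketch below compares a recent average of a tracked metric against an agreed baseline and raises an alert when the drop exceeds a tolerance. This is not part of Gartner's framework: the metric, the baseline, the tolerance, and the weekly figures are all hypothetical, and real monitoring would typically track several metrics, including fairness measures.

```python
from statistics import mean


def check_metric(baseline, recent_values, tolerance):
    """Return (current_average, alert); alert is True when the metric
    has dropped below the baseline by more than the tolerance."""
    if not recent_values:
        raise ValueError("recent_values must not be empty")
    current = mean(recent_values)
    return current, (baseline - current) > tolerance


# Hypothetical weekly accuracy readings for an AI skills-matching tool.
weekly_accuracy = [0.91, 0.90, 0.84, 0.82]

# Compare the last two weeks against an assumed baseline and tolerance.
current, alert = check_metric(
    baseline=0.92,
    recent_values=weekly_accuracy[-2:],
    tolerance=0.05,
)
print(f"current average: {current:.2f}, needs review: {alert}")
```

The useful part is less the arithmetic than the agreement behind it: someone has to decide in advance what the baseline is, how much drift is acceptable, and who is notified when the alert fires.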

Taking the First Step

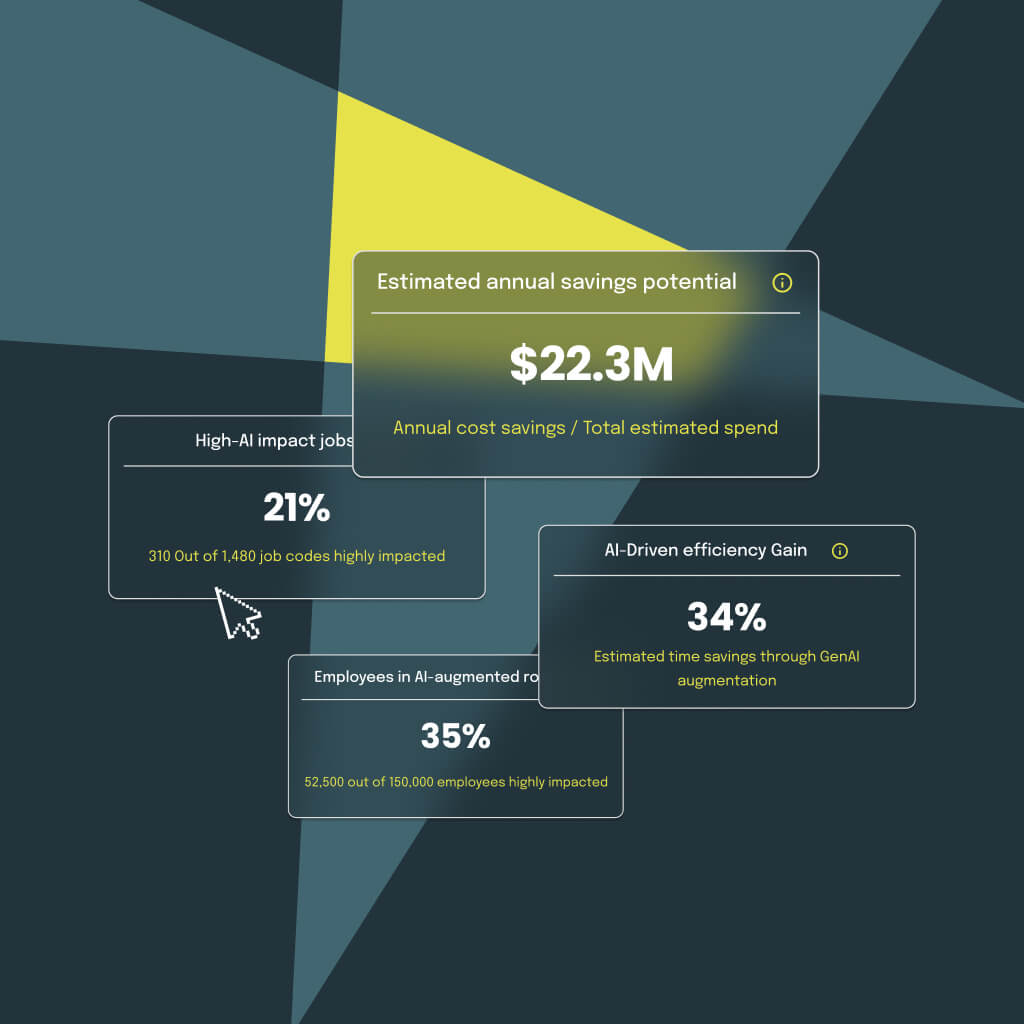

The path to effective AI risk management starts with visibility. You can’t manage risks you can’t see, and you can’t optimize a workforce transformation you can’t measure. That’s where data-driven insights become essential.

Gloat Signal provides the workforce intelligence CHROs need to navigate AI transformation with confidence. By analyzing how AI will impact specific roles and tasks across your organization, Signal helps you identify where to invest, which roles are at risk, and how to proactively reskill and redeploy your people. Instead of flying blind through AI transformation, you get a clear, data-driven roadmap for managing change while managing risk.

The organizations that will thrive in the AI era aren’t just the ones adopting fastest; they’re the ones managing risk smartest while empowering their people to adapt and grow. Try Gloat Signal and discover how workforce intelligence can transform your approach to AI risk management—turning uncertainty into strategic advantage.